The work marks a beginning in using machine learning techniques to optimize the architecture of chips.

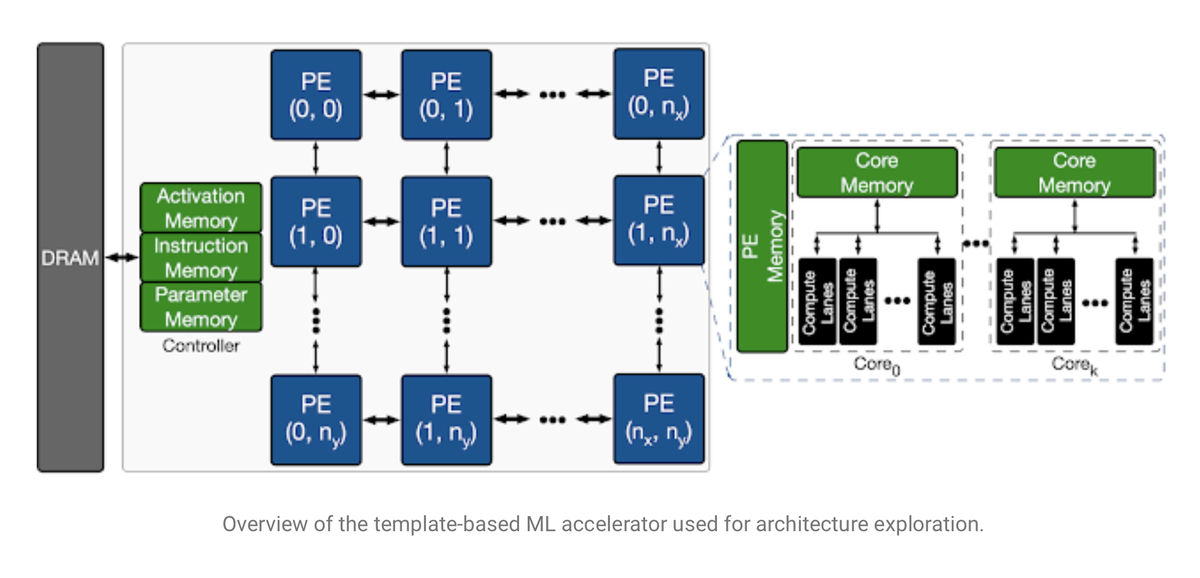

The so-called search space of an accelerator chip for artificial intelligence, meaning the functional blocks whose configuration the chip’s architecture must be optimized over. Characteristic of many AI chips are parallel, identical processor elements, here called “PEs,” which perform masses of simple math operations, chiefly the vector-matrix multiplications that are the workhorse of neural net processing.

This month, Google unveiled to the world one of those research projects, called Apollo, in a paper posted on the arXiv preprint server, “Apollo: Transferable Architecture Exploration,” and a companion blog post by lead author Amir Yazdanbakhsh.

Apollo represents an intriguing development that moves past what Dean hinted at in his formal address a year ago at the International Solid-State Circuits Conference.

In the example Dean gave at the time, machine learning could be used for some low-level design decisions, known as “place and route.” In place and route, chip designers use software to determine the layout of the circuits that carry out the chip’s operations, analogous to designing the floor plan of a building.

In Apollo, by contrast, rather than a floor plan, the program is performing what Yazdanbakhsh and colleagues call “architecture exploration.”

The architecture of a chip is the design of its functional elements, how they interact, and how software programmers gain access to them. For example, a classic Intel x86 processor has a certain amount of on-chip memory, a dedicated arithmetic-logic unit, and several registers, among other things. The way those parts are put together gives the so-called Intel architecture its meaning.

Asked about Dean’s description, Yazdanbakhsh said, “I would see our work and place-and-route project orthogonal and complementary.

“Architecture exploration is much higher-level than place-and-route in the computing stack,” explained Yazdanbakhsh, referring to a presentation by Cornell University’s Christopher Batten.

“I believe it [architecture exploration] is where a higher margin for performance improvement exists,” said Yazdanbakhsh.

Yazdanbakhsh and colleagues call Apollo the “first transferable architecture exploration infrastructure,” the first program that gets better at exploring possible chip architectures the more it works on different chips, thus transferring what is learned to each new task.

The chips that Yazdanbakhsh and the team are developing are themselves chips for AI, known as accelerators. This is the same class of chips as the Nvidia A100 “Ampere” GPUs, the Cerebras Systems WSE chip, and many other startup parts currently hitting the market. Hence, an excellent symmetry: using AI to design chips to run AI.

Given that the task is to design an AI chip, the architectures that the Apollo program is exploring are architectures suited to running neural networks. And that means lots of linear algebra, lots of simple mathematical units that perform matrix multiplications and sum the results.

The team defines the challenge as finding the right mix of those math blocks to suit a given AI task. They chose a relatively simple AI task, a convolutional neural network called MobileNet, a resource-efficient network introduced in 2017 by Andrew G. Howard and colleagues at Google. Also, they tested workloads using several internally-designed networks for tasks such as object detection and semantic segmentation.

In this way, the goal becomes: What are the right parameters for the architecture of a chip such that, for a given neural network task, the chip meets specific criteria such as speed?

The search involved sorting through a space of over 452 million possible parameter combinations, including how many of the math units, called processor elements, would be used and how much parameter memory and activation memory would be optimal for a given model.
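To see why such a search space grows so quickly, note that every design parameter multiplies the number of candidate configurations. The following is a minimal sketch in Python; the parameter names and value ranges are hypothetical, chosen only for illustration, and are not the ranges used in the Apollo paper.

```python
from itertools import product
from math import prod

# Hypothetical design parameters and candidate values (illustrative only,
# not taken from the Apollo paper).
SEARCH_SPACE = {
    "pes_x": [1, 2, 4, 8, 16],             # processor elements along x
    "pes_y": [1, 2, 4, 8, 16],             # processor elements along y
    "parameter_memory_mb": [1, 2, 4, 8],   # on-chip memory for weights
    "activation_memory_mb": [1, 2, 4, 8],  # on-chip memory for activations
}


def space_size(space):
    """Number of distinct configurations: product of the choices per parameter."""
    return prod(len(values) for values in space.values())


def enumerate_designs(space):
    """Yield every configuration as a dict (only feasible for small spaces)."""
    names = list(space)
    for combo in product(*(space[name] for name in names)):
        yield dict(zip(names, combo))


print(space_size(SEARCH_SPACE))  # 5 * 5 * 4 * 4 = 400
```

Even this toy space has 400 points from four parameters; adding a few more parameters with wider ranges quickly makes full enumeration impractical, which is the motivation for smarter search.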

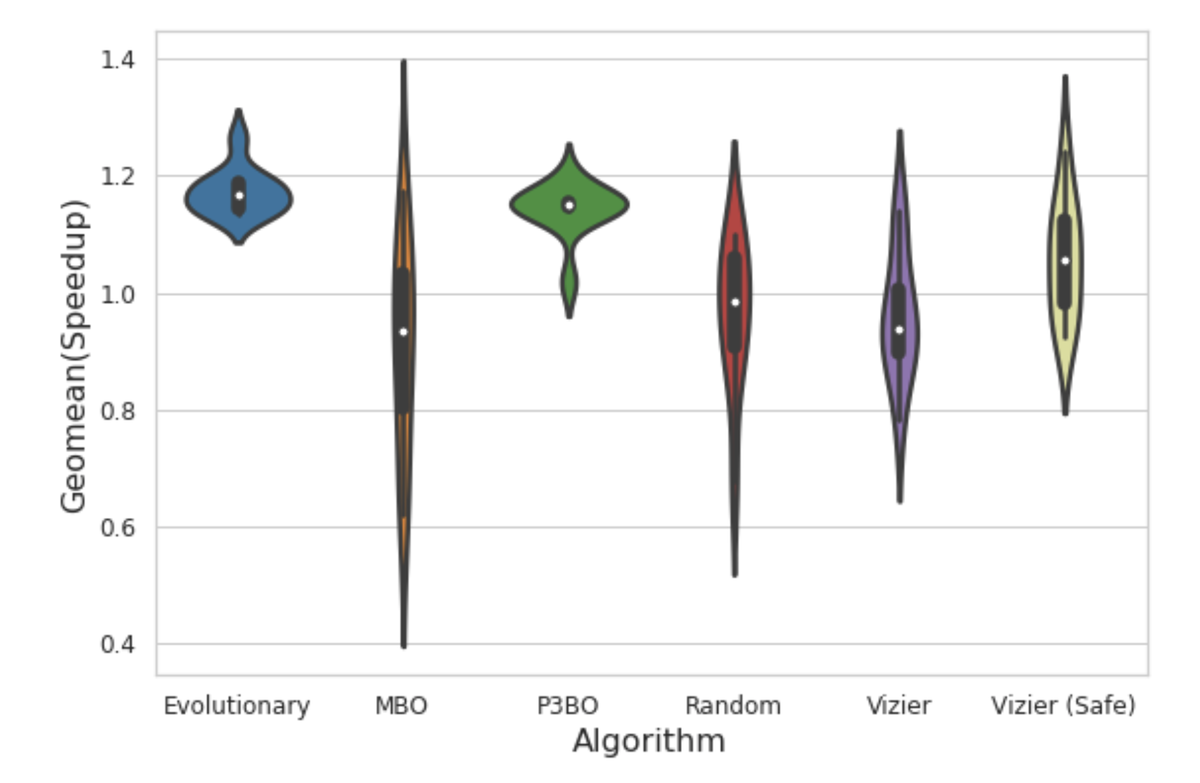

The virtue of Apollo is to put a variety of existing optimization methods head to head, to see how they stack up in optimizing the architecture of a novel chip design. Here, violin plots show the relative results.

Apollo is a framework, meaning that it can take a variety of methods developed in the literature for so-called black-box optimisation, adapt those methods to particular workloads, and compare how well each one meets the goal.

In yet another excellent symmetry, Yazdanbakhsh employs some optimisation methods designed to develop neural net architectures. They include the so-called evolutionary approaches developed by Quoc V. Le and colleagues at Google in 2019; model-based reinforcement learning and so-called population-based ensembles of techniques, developed by Christof Angermueller and others at Google for “designing” DNA sequences; and a Bayesian optimisation approach.

Hence, Apollo contains several levels of pleasing symmetry, bringing together approaches designed for neural network design and biological synthesis to design circuits that might, in turn, be used for neural network design and biological synthesis.

All of these optimisations are compared, which is where the Apollo framework shines. Its entire raison d’être is to run different approaches methodically and tell what works best. The Apollo trials detail how the evolutionary and model-based methods can be superior to random selection and other methods.
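The idea of running several black-box optimisers head to head under the same evaluation budget can be pictured with a small harness. This is only a sketch under stated assumptions: the cost function is a made-up stand-in for a chip simulator, the design space is hypothetical, and the evolutionary loop is a generic tournament-and-mutation scheme, not the specific algorithms Apollo evaluates.

```python
import random

# Hypothetical two-parameter design space (illustrative only).
PES = [2 ** i for i in range(1, 9)]  # number of processor elements: 2 .. 256
MEM = [2 ** i for i in range(0, 6)]  # on-chip memory in MB: 1 .. 32


def toy_cost(design):
    """Made-up stand-in for a simulator; lower is better."""
    pes, mem = design
    runtime = 1000.0 / pes + 50.0 / mem
    area = 0.5 * pes + 2.0 * mem
    return runtime + 10.0 * max(0.0, area - 80.0)  # soft area budget


def random_design(rng):
    return (rng.choice(PES), rng.choice(MEM))


def random_search(cost, budget, rng):
    """Baseline: evaluate `budget` random designs and keep the best."""
    return min((random_design(rng) for _ in range(budget)), key=cost)


def evolutionary_search(cost, budget, rng, pop_size=8):
    """Generic evolution: tournament selection, one-parameter mutation, aging."""
    pop = [random_design(rng) for _ in range(pop_size)]
    best = min(pop, key=cost)
    for _ in range(budget - pop_size):
        parent = min(rng.sample(pop, 3), key=cost)     # tournament of three
        child = list(parent)
        i = rng.randrange(2)
        child[i] = rng.choice(PES if i == 0 else MEM)  # mutate one parameter
        child = tuple(child)
        pop.append(child)
        pop.pop(0)                                     # retire the oldest design
        best = min(best, child, key=cost)
    return best


def compare(methods, cost, budget=30, trials=20, seed=0):
    """Run each method with the same budget and seeds; report mean best cost."""
    results = {}
    for name, method in methods.items():
        scores = [cost(method(cost, budget, random.Random(seed + t)))
                  for t in range(trials)]
        results[name] = sum(scores) / trials
    return results


METHODS = {"random": random_search, "evolutionary": evolutionary_search}
results = compare(METHODS, toy_cost)
for name, score in sorted(results.items(), key=lambda kv: kv[1]):
    print(f"{name}: mean best cost {score:.2f}")
```

Giving every method the same number of evaluations and the same seeds is the design choice that makes the comparison fair; which method wins on this toy problem says nothing about the results reported for Apollo.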

But the most striking finding of Apollo is how running these optimisation methods can make for a much more efficient process than brute-force search. They compared, for example, the population-based approach of ensembles against what they call a semi-exhaustive search of the solution set of architecture approaches.

Yazdanbakhsh and colleagues saw that a population-based approach could discover solutions that make use of trade-offs in the circuits, such as compute versus memory, that would ordinarily require domain-specific knowledge. Because the population-based approach is a learned approach, it finds solutions beyond the reach of the semi-exhaustive search:

“P3BO [population-based black-box optimisation] finds a design slightly better than semi-exhaustive with 3K-sample search space. We observe that the method uses a tiny memory size (3MB), favouring more compute units. This leverages the compute-intensive nature of vision workloads, which was not included in the original semi-exhaustive search space. This demonstrates the need for manual search space engineering for semi-exhaustive approaches, whereas learning-based optimisation methods leverage large search spaces to reduce manual effort.”

So, Apollo can figure out how well different optimisation approaches will fare in chip design. However, it does something more, which is that it can run what’s called transfer learning to show how those optimisation approaches can, in turn, be improved.

The optimisation strategies are first run to improve a chip for one design point, such as a maximum chip size in millimetres. The outcome of those experiments can then be fed as inputs to a subsequent optimisation run. The Apollo team found that various optimisation methods improve their performance on a task like area-constrained circuit design by leveraging the best results of the initial, or seed, optimisation method.
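One way to picture this seeding step is as a warm start: the best designs found under one area budget pre-fill the starting population of a search under a different budget. The sketch below is an assumption-laden illustration, with a made-up cost function, a hypothetical design space, and a generic mutation-based search; it is not the transfer mechanism as implemented in Apollo.

```python
import random

# Hypothetical design space (illustrative only).
PES = [2 ** i for i in range(1, 9)]  # number of processor elements
MEM = [2 ** i for i in range(0, 6)]  # on-chip memory in MB


def make_cost(area_budget):
    """Build a made-up cost function for a given area budget; lower is better."""
    def cost(design):
        pes, mem = design
        runtime = 1000.0 / pes + 50.0 / mem
        area = 0.5 * pes + 2.0 * mem
        return runtime + 10.0 * max(0.0, area - area_budget)
    return cost


def evolve(cost, budget, rng, seeds=(), pop_size=8):
    """Mutation-based search; `seeds` pre-fill part of the initial population.

    Returns every distinct evaluated design, sorted best-first.
    """
    pop = list(seeds)[:pop_size]
    while len(pop) < pop_size:
        pop.append((rng.choice(PES), rng.choice(MEM)))
    seen = list(pop)
    for _ in range(budget - pop_size):
        parent = min(rng.sample(pop, 3), key=cost)     # tournament of three
        child = list(parent)
        i = rng.randrange(2)
        child[i] = rng.choice(PES if i == 0 else MEM)  # mutate one parameter
        child = tuple(child)
        pop.append(child)
        pop.pop(0)                                     # retire the oldest design
        seen.append(child)
    return sorted(dict.fromkeys(seen), key=cost)       # dedupe, keep order


rng = random.Random(0)

# Source task: a generous area budget and a full evaluation budget.
source = evolve(make_cost(area_budget=100.0), budget=40, rng=rng)

# Target task: a tighter area budget, seeded with the source task's top designs.
target_cost = make_cost(area_budget=60.0)
warm = evolve(target_cost, budget=20, rng=rng, seeds=source[:4])

print("best seeded design:", warm[0], "cost:", round(target_cost(warm[0]), 2))
```

Because the seeds are part of the evaluated set, the warm-started search can never end up worse on the target task than the best seed it was given; whether it beats a cold start depends on how similar the two tasks are.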

All of this has to be qualified by the fact that designing chips for MobileNet, or any other network or workload, is bounded by how well the design process applies to that workload.

One of the authors, Berkin Akin, who helped develop a version of MobileNet, MobileNet Edge, has pointed out that overall system performance is a product of both chip and neural network optimisation.

“Neural network architectures must be aware of the target hardware architecture to optimise the overall system performance and energy efficiency,” wrote Akin last year in a paper with colleague Suyog Gupta.

How valuable is hardware design when isolated from the creation of the neural net architecture?

“Great question,” Akin replied in an email. “I think it depends.” Akin said Apollo may be sufficient for given workloads, but that what’s called co-optimisation, between chips and neural networks, will yield other benefits down the road.